It's all Garbage!

Why Your AI Strategy Is Burning Millions on a Data Clean-Up That Will Never Happen

This title will probably earn me a few groans, raise a few eyebrows, and draw more than a little pushback.

For decades, the technology sector has hidden behind the comfortable old shield of “garbage in, garbage out”. It’s the ultimate get-out-of-jail-free card for failed projects, bad reporting, and broken software. In the era of Generative AI, I’m seeing the exact same defensive playbook being deployed, but with a modern twist: organizations are either slow-playing their AI strategies in a desperate, multi-year scramble to get their data “perfectly clean” first, or they are throwing in the towel and blaming “bad data” the second a model hallucinates.

It’s all over tech social media, and it’s all garbage.

Let’s stop the hand-wringing. It is not the data itself that is the fundamental problem here, and it is lazy to blame a probabilistic system for messing up. Especially when you aren’t controlling that system the way an enterprise team should.

The real world is not a clean database schema; it is an inherently messy, unstructured dump of documents, emails, spreadsheets, and legacy systems. If your AI strategy relies on waiting until your data is pristine, your competition is going to leap so far ahead of you that you’ll never catch up. The breakthrough isn’t in fixing the garbage before you start; it’s in building an architecture that actually knows how to govern and synthesize the mess during inferencing.

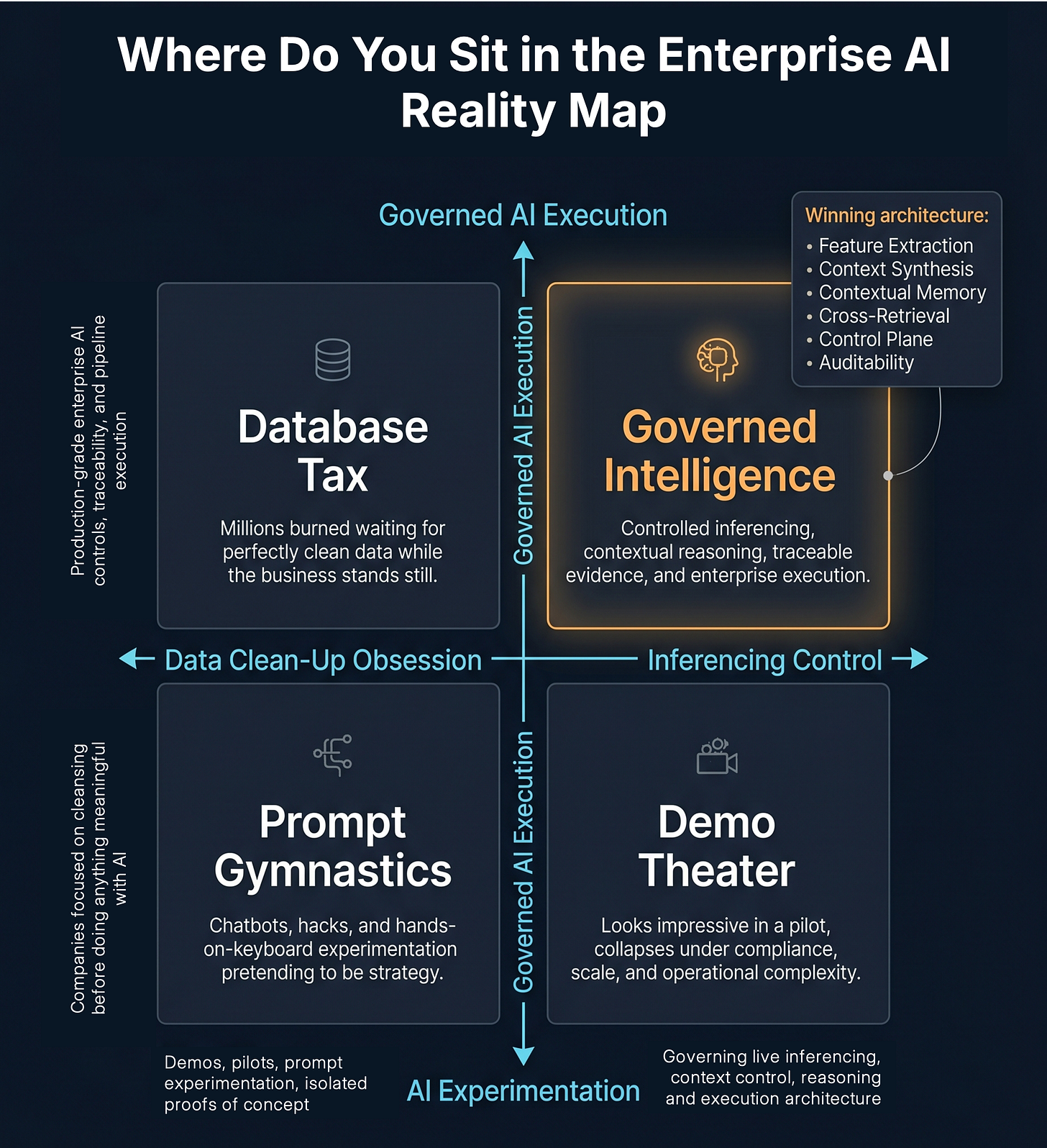

The enterprise AI market is quickly sorting into four camps. Only one is built for production.

The Myth of Enterprise “Structure”

If you listen to the data purists and database engineers, they will yell at you about the absolute necessity of structured data. They’ll tell you that until everything is perfectly normalized, mapped, and stored in a clean relational database, you have no business deploying advanced AI.

But anyone who has spent thirty years in the enterprise software trenches knows the dirty secret of corporate IT: truly consistent structured data rarely exists across the enterprise at scale.

Structure is merely a highly localized version of the truth sitting inside a single, isolated silo. The absolute instant you try to move data from one silo to the next, your beautiful, fragile structure begins to fracture. By the time you try to connect three or four different systems, the entire schema breaks down, and you are immediately dropped back into the painful world of semi-structured data where mapping and normalization turn into a multi-million-dollar nightmare. Anyone who has survived an ERP migration knows exactly what this looks like and why those projects routinely drag on for years.

In the real world, 99% of business is noise, and almost all of the high-value information that actually runs a company is locked away in unstructured formats. It sits in contracts, emails, pitch books, compliance filings, and PDFs. This is where business actually happens.

If your AI strategy requires converting all of that fluid, conversational business logic into rigid databases before a model can touch it, you have already lost the battle.

The Repetition Trap: Why Mimicry is Easy, but Architecture is Hard

We see the breathless headlines about AI passing bar exams, writing code, or generating stunning images, and we mistake sophisticated pattern matching for actual intelligence. But why is AI so good at coding, breaking code, understanding images, and writing standard documents? The answer is simple: repetition and rigid guardrails.

Code is inherently structured, highly patterned, and backed by billions of public examples. For a statistical processor, replicating or even breaking code is trivial; it’s just rinsing and repeating patterns all day long. The same goes for standard white-hat hacking scripts, basic image recognition, or drafting boilerplate prose. There are millions of pre-existing templates to copy.

But get that model to design a novel system architecture, think outside the established box, or navigate the highly nuanced, shifting reality of your specific business workflows? That is a completely different story.

Real-world business doesn’t have a standardized, public repository to copy from. When you throw a raw model at these complex environments, it fails to capture the actual signal because the underlying structure isn’t there to guide it. Instead, you end up with a human standing at the helm—prompting, reprompting, adjusting, correcting, and constantly babysitting the output just to get something usable. It is an exhausting, tiresome loop of prompt gymnastics masquerading as intelligence, and frankly, it is a terrible way to run an enterprise.

The Stateless Interpreter in a Messy World

To build a real solution, we have to start by stripping away the magic and looking at what LLMs actually are. They are not omniscient oracles or cognitive databases; they are stateless processors and linguistic interpreters. They have no memory of your business, your policies, or your operational history. They simply react to whatever text strings you shove into their context window at the exact moment of inferencing.

If you feed this stateless engine a chaotic, noisy pile of data, or try to guide its behavior with a fragile, fifty-page system prompt, you are entirely at its mercy. It will happily hallucinate a beautifully formatted, highly authoritative lie right out of the digital landfill you handed it—which is exactly how most generic “chat-with-your-data” solutions operate today.

This brings us to the critical distinction that the industry constantly glosses over: the difference between training and inferencing. The real, high-stakes battleground for enterprise AI is not in training. Let’s leave training to the backroom science and analysts; it is an incredibly expensive, slow-moving process designed simply to teach a model vocabulary and broad statistical patterns.

Inferencing, however, is where the rubber meets the road. It is the live execution path, and it is where your software architecture actually has to step up. During inferencing, your system must be sophisticated enough to actively deconstruct the messy unstructured and semi-structured world, extract the actual signals, and present them to the model as clean, isolated, and unambiguous reasoning components. If you just dump a raw, unparsed document or a lazy keyword-search chunk into the model, you will get sophisticated garbage back. But if you architect a layer that deconstructs that data into its core logical elements first, you can finally drive deterministic reasoning out of a probabilistic engine.

Why RAG and Graph RAG are Cracking Under Pressure

To solve this inferencing problem, the industry rushed to adopt Retrieval-Augmented Generation (RAG). But standard RAG is an incredibly blunt instrument. It relies on semantic similarity, slicing documents into arbitrary chunks and hoping that a basic mathematical match brings back the right context. It frequently fails because it completely lacks an understanding of document hierarchy, metadata, or the actual business relationships embedded within the text.

To fix this, everyone is now hyping “Graph RAG” as the ultimate savior. But let’s be honest: Graph RAG has its place, but in dynamic enterprise environments it often becomes brittle, expensive, and difficult to maintain. Graph databases require you to pre-define schemas and relationships. The moment your business requirements change, or a new type of unstructured document is introduced, your static graph starts to break down. Graph RAG still struggles to handle complex, ever-shifting context and relationships across an enterprise’s changing daily workflows.

What the enterprise actually needs is an on-demand, dynamic ontology—a system that can construct relationships, trace lineages, and synthesize context on the fly, tailored to the exact question being asked at that exact second.

Engineering the Solution: Real Time Context Synthesis

If your reaction to the standard Graph RAG pipeline is a heavy sigh, you aren’t alone. That visceral dread is the mark of an actual engineer who has survived real-world production systems. It’s exactly why we built a completely different path.

At Charli AI, we realized early on that trying to force a raw LLM to process unstructured enterprise files and hoping the “vibe” holds is an architectural dead end. You need a dedicated, sovereign layer sitting squarely between your chaotic corporate data and the reasoning model. We bypassed the limitations of basic RAG, the brittle nightmare of static Graph RAG, and the eye-watering expense of pre-tuning pipelines by engineering a real-time execution layer built for a dynamic world.

The Real-Time Context Synthesis Engine: Instead of lazily slicing text and tossing raw chunks at a model, this engine actively deconstructs unstructured documents, emails, spreadsheets, tables, charts, graphs, and narratives into their fundamental semantic and logical primitives in real time. This is where high-performance Feature Extraction meets deep Feature Contextualization on the fly. It doesn’t rely on a static, pre-computed map of the world. It strips away ambient noise, dynamically resolves context, and enriches the underlying data so the model receives signal instead of sludge.

Contextual Memory Architecture: Once synthesized, this intelligence is captured in a fluid, dynamic memory layer. When the system needs to evaluate a complex transaction, audit a compliance record, or cross-reference a contract, the architecture serves up the contextually relevant evidence required for reasoning. This memory is not a static dump used to stuff a prompt before hitting a stateless LLM; it is a live, structured resource that guides, constrains, and validates the model’s multi-step reasoning plans.

Contextual Cross-Retrieval: This is the critical architectural linchpin. It dynamically fetches the precise, cross-silo details required to navigate complex reasoning paths. By intercepting the data flow and isolating the actual signal from the surrounding noise before it ever reaches the LLM, we materially reduce prompt contamination by controlling what reaches the model in the first place. The model doesn’t have to guess, compromise, or filter out corporate chatter; it is handed validated, traceable facts.

The practical result of this architecture is that during live inferencing, your reasoning engine is never drowning in enterprise noise. It isn’t trying to parse half-baked context, struggle against a broken database schema, or hallucinate a bridge between isolated silos. Instead, the model is fed a highly curated, fully traceable, and completely clean stream of synthesized context. You get controlled, traceable, reliable enterprise execution—without the multi-million-dollar database tax.

The Billion-Dollar Risk Sitting in the Chaos

Here’s the kicker: that chaos, the same so-called garbage everyone wants to clean up later, contains the crown jewels of your organization.

Customer data buried in proposals, contracts, agreements, and transactions. Privacy land mines sitting inside emails. Pricing, strategy, forecasts, and board-level thinking tucked away in presentations. Sensitive information hiding in audio files, video recordings, shared drives, cloud folders, and laptops across the company.

AI has opened a fascinating new frontier, but it has also exposed a glaring risk. Firewalls no longer contain the problem. In the age of AI, you are now funnelling private, confidential, and strategically sensitive information through a powerful reasoning layer that most organizations barely control.

That is not just an architecture issue. It is a liability issue.

Unless you get control over what the industry calls dark data, you are not sitting on an untapped asset. You are sitting on a risk buried inside the balance sheet.

Stop Waiting, Start Governing

The era of deploying raw models and hoping a clever system prompt will keep them on the rails is officially over. If you are sitting on the sidelines waiting for a multi-year data-cleaning project to finish before executing your AI strategy, you have already lost. The true first-mover advantage in enterprise AI is not going to the companies with the cleanest legacy databases, nor is it going to those spinning up flashy proof-of-concept demos that collapse the moment they hit compliance or security review.

The winners will be the organizations that accept the chaotic reality of a messy, unstructured world and build the actual engineering controls required to handle it. Those controls cannot be coaxed out of a model with clever prose; they require disciplined architecture, strict deterministic guardrails, and rigorous execution.

“Garbage-in, garbage-out” is only an inevitability if you have zero control over your execution path. When you build an architecture that actively deconstructs the mess, synthesizes context in real-time, and enforces strict, predictable boundaries during inferencing, AI stops being a guessing machine. It becomes a reliable, governed intelligence engine that can actually survive production in the real world.

Even quantum computing, the supposed frontier of computational magic, keeps running into a brutally practical problem: preserving the information state long enough to do something useful. Enterprise AI has the same ugly little problem, just with more PDFs, spreadsheets, policy documents, and corporate folklore.

That is why Feature Extraction, Feature Contextualization, and Contextual Cross-Retrieval matter. They are not cosmetic enhancements around a model. They are the control architecture that preserves context, isolates signal, and gives the reasoning engine a reliable information state to operate against.

It is time to stop using the messy nature of enterprise data as an excuse for lazy engineering. Stop waiting for the perfect database schema, stop relying on the illusion of prompt engineering, and start building the control plane.